AI's cognitive crisis is being willfully ignored

We're in the early days of a mass cognitive crisis, obscured by capital forces in the same way our environmental crises have been obscured by the fossil fuel industry for decades. That the two operate according to the same morally-bereft framework is no surprise.

When exponential capital gains are on the table, the resulting damage to human and environmental health is viewed as nothing more than new opportunity for profit. This is peak disaster capitalism, a term coined by Naomi Klein that describes how the military industrial complex – that predatory and most deadly extension of capitalism – exploits disaster to spur capital growth. To capitalists, mo' problems equal mo' opportunity to sell "solutions". Each new iteration of disaster capitalism is a further abstraction from human well-being, where new horrors are being normalized at a rate most of us wouldn't have been able to conceive of only a few years ago.

We're being ground down, and AI is the pestle, meant to neutralize, naturally select, or eliminate whatever is left of our social agency while capitalists attempt to iterate themselves into a closed-loop system of total abstraction, ie: Cloud Capitalism – a digital economy built on massive compute infrastructure, where AI agents are both the labourers and the consumers. "Survival of the fittest", that absurdly human-centric social Darwinist philosophy, is being readily embraced by those who serve capital before humanity. Eugenics, something we previously relegated to the most corroded and fascist social models, is being openly embraced by the movement, something that's been revealed in detail by multiple academic bodies, including Oxford University in a paper titled, Building the ‘Fitter’ Future: Eugenics, Tech Capitalism, and the Politics of Existential Risk, stating, "tech capitalists and conservative elites deploy eugenic logics as ‘common sense’ solutions to social challenges such as climate change, ‘gender ideology,’ and fears of depopulation". We're living through that deployment now.

We're past the 'Platform Economy', and what it's evolved into is much bigger and much more dangerous.

In a paper titled Cloud Capitalism and the AI Transition, the authors state (bolding mine):

Reliance on advanced IT infrastructure has long been integral to a range of digital areas like cybersecurity, data processing, 3D video rendering, and semiconductor design. It is also increasingly essential for other fields such as genomics, financial simulations, oil and gas exploration, weather forecasting, and engineering. But it is absolutely central to the development of AI; indeed, the cloud is the foundational infrastructure upon which the entire AI ecosystem is being built. As such, the cloud business model is both a key enabler of AI development and, critically, the primary vehicle for its diffusion.

...the dominant belief underpinning AI advancement is that progress and the attainment of an all-powerful artificial general intelligence (AGI) depends on scale—more data, larger models, and above all, more compute. In this context, the computing capacity made available by the vast infrastructure of the cloud has become the most critical and contested resource. Competition in AI today is not so much about developing new intellectual property (researchers and AI companies often openly publish new innovations in their model design online) but having access to the capital—the physical infrastructure—to train and run the largest models with the most parameters.

Something I want to point out is that this new mode of capitalism doesn't rely on chatbots like ChatGPT, which is what a lot of people, for some reason, associate with data centres. Consumer-facing AI products are a sliver of what constitutes this new model:

Departing from the previously dominant, consumer-facing, and asset-light platform model, cloud firms have become intensely asset-heavy, enterprise-oriented, collaborative, and vertically integrated operations. The top cloud players, which once thrived on data-driven, consumer-centric platforms, have increasingly shifted their focus toward providing sophisticated IT infrastructure services and specialized computational capabilities to a much smaller number of large enterprise clients. In doing so, these cloud giants have accumulated immense physical infrastructures—including data centers, specialized hardware, and even energy facilities—that constitute a much more capital-intensive digital economy.

We're past the framework of "data-driven". They already have tons of data. How much is 'tons'? Three years ago I wrote a piece called What is your data and where does it go? and at the time it was estimated the data industry would amass 2,142 zettabytes of data by 2035. That's 2,142,000,000,000,000,000,000,000 bytes. For scale context, one zettameter is about the diameter of the Milky Way galaxy. Big Data stats released December 2025 state, "the world is generating 181 zettabytes of data, an increase of 23.13% YoY, with 2.5 quintillion bytes created daily. That’s 29 terabytes every second, or 2.5 million terabytes per day."* Also worth noting, in 1999 "Big Data" was considered 1GB.
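For anyone who wants to sanity-check those conversions, here is a minimal Python sketch. It assumes decimal (SI) units, where a terabyte is 10^12 bytes and a zettabyte is 10^21 bytes. The constant and function names are illustrative only and don't come from any of the sources quoted above.

```python
# Decimal (SI) byte units, expressed as powers of ten (an assumption;
# binary units such as tebibytes would give slightly different numbers).
TERABYTE = 10**12
ZETTABYTE = 10**21
SECONDS_PER_DAY = 24 * 60 * 60


def zettabytes_to_bytes(zettabytes: int) -> int:
    """Convert a whole number of zettabytes to bytes."""
    return zettabytes * ZETTABYTE


def terabytes_per_second(bytes_per_day: int) -> float:
    """Convert a daily byte count into terabytes per second."""
    return bytes_per_day / SECONDS_PER_DAY / TERABYTE


# The 2035 projection cited above: 2,142 zettabytes.
projected_2035_bytes = zettabytes_to_bytes(2_142)
print(f"{projected_2035_bytes:,} bytes")

# The quoted daily figure: 2.5 quintillion bytes (2.5 x 10^18).
daily_bytes = 25 * 10**17
print(f"{daily_bytes // TERABYTE:,} terabytes per day")
print(f"{terabytes_per_second(daily_bytes):.1f} terabytes per second")
```

Run as written, this reproduces the byte count given for the 2035 projection, and the quoted daily figure works out to 2.5 million terabytes per day, or roughly 29 terabytes per second.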

What they haven't had up until this iteration of Cloud Capitalism is the means to process and synthesize all that data. 70% of the world's data is user-generated*, and rest assured they already have the means to gather as much data as they could ever want, 'means' that we'll get into, but the industrial bottleneck is just having the infrastructure to process it at scale. Data centres are meant as massive compute-hubs – literally the digital brains of our civilizations. Which is why, as stated in the study above, "the computing capacity made available by the vast infrastructure of the cloud has become the most critical and contested resource".

AI is the current driver of the global economy. AI agents (bots) and algorithms are meant to perform like neurons within digital neural networks hosted by data centres. They prod users for engagement, measure behavioural metrics, then carry that data back to servers for processing. From there, through AI-driven synthesis and visualization, the data can be applied toward making decisions at scale. A "decision" might look like an algorithm tweaking a user's experience to produce more profitable behaviour, or it might look like the deployment of a nuclear bomb against enemy states. Everything in between those extremes is on the table.

Having the omnipresent ability to monitor and control entire civilizations is the holy grail of capitalism. It's what the entire corporate class believes itself to be on the precipice of, and it's what every corporate leader is working towards (as well as the governments that serve them). The technofascist regime of Trump/Thiel has absorbed the sins of the movement to enable every other leader in barrelling forward into technocapitalism as the existential solution to the problems capitalism has so eagerly cultivated.

The business philosophies of the past few years that have pedestaled "disruption" and a credo of "move fast and break things" are now contending with multi-billion dollar lawsuits (some documented here) for their recklessness. Some critics like author Cory Doctorow are calling AI the asbestos of the tech industry, "stuffed there by monopolists run amok"*, and notable critics like Ed Zitron are meticulously documenting the seemingly-nonsensical inflation of the AI bubble.

The AI market, dominated by a few monopolistic hyperscalers, now holds trillions in capital captive in a web of hype that is largely based in fantasy and fuelled by an existential crisis of capital, exacerbated by threats from non-Western technology powers like China.

And here's a good place to remind ourselves that there is only about 150 trillion worth of currency in the whole world. They are literally liquidating and siphoning capital from the common classes to build their new order. That is why affordability is rapidly diminishing, why investment in the public sector is swiftly evaporating, and why anyone who isn't paying attention will be left in worse shape than those who are taking these impacts seriously and adjusting accordingly (my main advice here is to stop relying blindly on institutional safety nets and start investing energy in localized community systems).

Their trolley problem only has one track, and the choices they've given us are: become the track, or die. There is no scenario where we're rescued by the trolley, but as I shared in my last post, I do think there's a third option, which is to untie ourselves from the track, build new community-scaled economic models, and let them careen off the cliff they're so eagerly racing toward.

But the dissolution of our physical economy isn't the only cost of this scheme. There is a much higher cost that capitalism is demanding from us – our cognitive agency. I've previously touched on concepts of cognitive agency in terms of social media and LLMs, but I feel like I need to spell the risk out more thoroughly, which I'm about to do.

The AI elephant in the room is rapid cognitive decline

Let's change gears now and take a deep breath.

You've probably read at least one story of "AI psychosis" over the past few months. There are many. Recently I came across yet another horrifying account of a previously well-adjusted and well-socialized person's life deteriorating after years of using ChatGPT. This person ultimately ended their life. The full story is linked below.

Details of the story noted that the victim:

- Used ChatGPT "responsibly" for "a few years" before eventually turning to it as a confidante, and subsequently unravelling.

- “He was not a depressed person,” his wife shared, "he was the most hopeful person".

- He never discussed suicide with the bot, according to his chat logs.

- He was tech-savvy, coding and gaming on his own custom-built computer.

- He trusted OpenAI's nonprofit status in terms of them presenting as an ethical company.

- By spring 2025, he "had begun spending more than 12 hours a day in the basement, sometimes up to 20, typing to ChatGPT", "the AI was telling him he was breaking math and basically reinventing physics"

- He began having incidents of "acting strangely", like showing up disoriented at a neighbour's house and walking their horse around with a rope.

The article points to the following possible reasons for the victim's tragic spiral:

- The notoriously sycophantic ChatGPT 4o model, which catalyzed many other mental health emergencies and deaths.

- Users' growing inability to differentiate between AI and real people, ie: anthropomorphizing.

- General lack of pushback from chatbots (ie: sycophancy).

There was one quote from his wife that I thought would have a larger impact on how the story was shaped,

“All of a sudden, his cognition had dramatically fallen,” said Fox. “His working memory was crap, and his critical thinking had diminished.”

But rather than bringing this to the forefront – this glaringly clear example of what happens when cognition, working memory, and critical thinking are eroded – the article instead glossed over it and pointed to anthropomorphization and sycophancy as the core issues, as though if a user simply avoids treating their chatbot like a human/oracle (also, which is it? Are they too human or too god-like??), they'll be spared the same fate. This infuriates me.

Let's talk about psychosis

For all the talk about "AI psychosis" there's very little discussion around which areas of the brain psychosis actually involves. I feel like this is important if we're considering the broader impact of ChatGPT.

First we should distinguish between psychosis and schizophrenia. Psychosis is considered episodic, while schizophrenia is considered a long-term mental illness. Temporary psychosis can be triggered by sleep deprivation, hallucinogenic drugs, extreme stress, and certain medications. In all those cases, areas of the brain are critically, though temporarily, impacted, causing distressing symptoms.

A NeuroLaunch article titled, Reshaping the Mind: Structural and Functional Brain Changes in Psychosis, states, "one of the most striking changes observed in individuals with psychosis is alterations in brain volume and cortical thickness." The article goes on,

it’s not just about the physical structures; the brain’s white matter, the information superhighways connecting different regions, also undergoes significant changes. In psychosis, these neural highways may develop potholes or unexpected detours, leading to disruptions in communication between brain areas. This altered connectivity can contribute to the fragmented thinking and perceptual distortions characteristic of psychotic disorders.

Certain brain regions seem to be particularly vulnerable to the effects of psychosis. The prefrontal cortex, our brain’s CEO, often shows abnormalities in individuals with psychotic disorders. This can lead to difficulties in executive functioning, decision-making, and emotional regulation. Meanwhile, the hippocampus, our memory’s file cabinet, may also show changes, potentially contributing to the cognitive symptoms of psychosis.

Functional connectivity, or how different brain regions work together, is another area where psychosis leaves its mark. It’s as if the various departments in our brain’s city hall have suddenly stopped communicating effectively, leading to a breakdown in coordinated activity. This disruption in functional connectivity can manifest as the disorganized thinking and behavior often seen in psychotic disorders.

So given the increasingly common yet vague diagnoses of "AI psychosis", and assuming it's a legitimate form of psychosis, I think we're overdue for hard research that investigates what is actually happening to the brains of ChatGPT users. If we're earnestly talking about "psychosis" then we must also talk about the areas of the brain most affected by it, which appear to be the prefrontal cortex, the hippocampus, and regions responsible for connectivity, which generally exist within the cerebral cortex and entorhinal cortex. We'll revisit these regions and their functions in a minute here.

Research results would no doubt be both revealing and damning, which may be why no one dares touch the topic, given the entire global corporate class has invested everything into tools that are actively disrupting and degrading our neural networks.

Now let's talk about Alzheimer's

Alzheimer's is a common form of dementia caused when neural networks are disrupted – neurons become disconnected from their networks and ultimately die, resulting in brain shrinkage. Anyone who's watched a loved one suffer from dementia knows it's an absolute tragedy to experience and a nightmare to witness.

As explained in this article from the National Institute on Aging:

At first, Alzheimer’s usually damages the connections among neurons in parts of the brain involved in memory, including the entorhinal cortex and hippocampus. It later affects areas in the cerebral cortex responsible for language, reasoning, and social behavior.

The rate of onset is different for different people:

The [report] authors say that some of this individual variability may stem from the fact that many people with Alzheimer’s have more than one cause of cognitive illness, such as vascular dementia or fronto-temporal dementia. Genetic and environmental factors, such as brain injuries, alcohol consumption or smoking habits, are also thought to play a part.

Here let's take a moment to digest the fact that the development of dementia and Alzheimer's is influenced by outside factors. Genetic factors play a role too, but they're far from the leading reason people get the disease.

The areas of the brain affected, as mentioned above, are the entorhinal cortex, hippocampus, and cerebral cortex. I'm not an expert on the brain, and I'm relying on Wikipedia to contextualize each of these regions:

The entorhinal cortex is an area of the brain's allocortex (a region tucked under the frontal lobe in the medial temporal lobe). Its functions include, "being a widespread network hub for memory, navigation, and the perception of time."* It is the main interface between the hippocampus and neocortex.

The hippocampus is part of the archicortex, one of the three regions of allocortex, and is important in the consolidation of information from short-term memory to long-term memory, and in spatial memory that enables navigation. In Alzheimer's, the hippocampus is one of the first regions damaged.

The cerebral cortex is the outer layer of neural tissue of the cerebrum. It is the largest site of neural integration in the central nervous system, and plays a key role in attention, perception, awareness, thought, memory, language, and consciousness.

One would think that protecting these regions would be a priority for most people, given we have very clear and terrifying examples of what happens when their functions diminish due to atrophy.

It's also important to note that cases of Early-Onset Dementia are rising quickly, though studies avoid pointing to causes, ie:

- The number of people living with dementia in Canada is expected to increase by 187% from 2020 to 2050 – with more than 1.7 million people likely to be living with dementia by 2050 - Alzheimer Society of Canada

- Scientists Uncover Alarming Rise in Early-Onset Dementia: Recent research shows an unexpectedly high rate of early-onset dementia in adults under 65, with particularly significant findings for Alzheimer’s, challenging previous data on the condition’s prevalence - SciTechDaily

- Global burden of dementia in younger people: an analysis of data from the 2021 Global Burden of Disease Study - eClinicalMedicine

- Early-Onset Dementia and Alzheimer’s Diagnoses Spiked 373 Percent for Generation X and Millennials [American] - BlueCross BlueShield

Now let's talk about "cognitive debt"

In early 2025, Microsoft put out a study based on self-reported survey data called, The Impact of Generative AI on Critical Thinking, that concluded, "while GenAI can improve worker efficiency, it can inhibit critical engagement with work and can potentially lead to long-term over-reliance on the tool and diminished skill for independent problem-solving."

When I saw the study I immediately shared it with my workplace (which uses all Microsoft tools), assuming the fact that it came from Microsoft would provide the validity required to prompt healthy skepticism of such tools. I assumed critical engagement and maintaining problem-solving skills mattered to the organization. Instead, they booked engagement sessions with an AI-hype nonprofit (funded by several big tech AI companies) that encouraged staff to adopt LLM tools "responsibly" and "in ways that made sense for them". It was the first flag in my mind that something wasn't right, and that booster campaigns were already drowning out safety and regulatory considerations.

Later that year, an MIT study titled, Your Brain on ChatGPT: Accumulation of Cognitive Debt when Using an AI Assistant for Essay Writing Task was released. The study showed AI tools like ChatGPT created "cognitive debt":

The integration of LLMs into learning environments presents a complex duality: while they enhance accessibility and personalization of education, they may inadvertently contribute to cognitive atrophy through excessive reliance on AI-driven solutions. Prior research points out that there is a strong negative correlation between AI tool usage and critical thinking skills, with younger users exhibiting higher dependence on AI tools and consequently lower cognitive performance scores.

Furthermore, the impact extends beyond academic settings into broader cognitive development. Studies reveal that interaction with AI systems may lead to diminished prospects for independent problem-solving and critical thinking. This cognitive offloading phenomenon raises concerns about the long-term implications for human intellectual development and autonomy.

The study is extensive and relatively accessible:

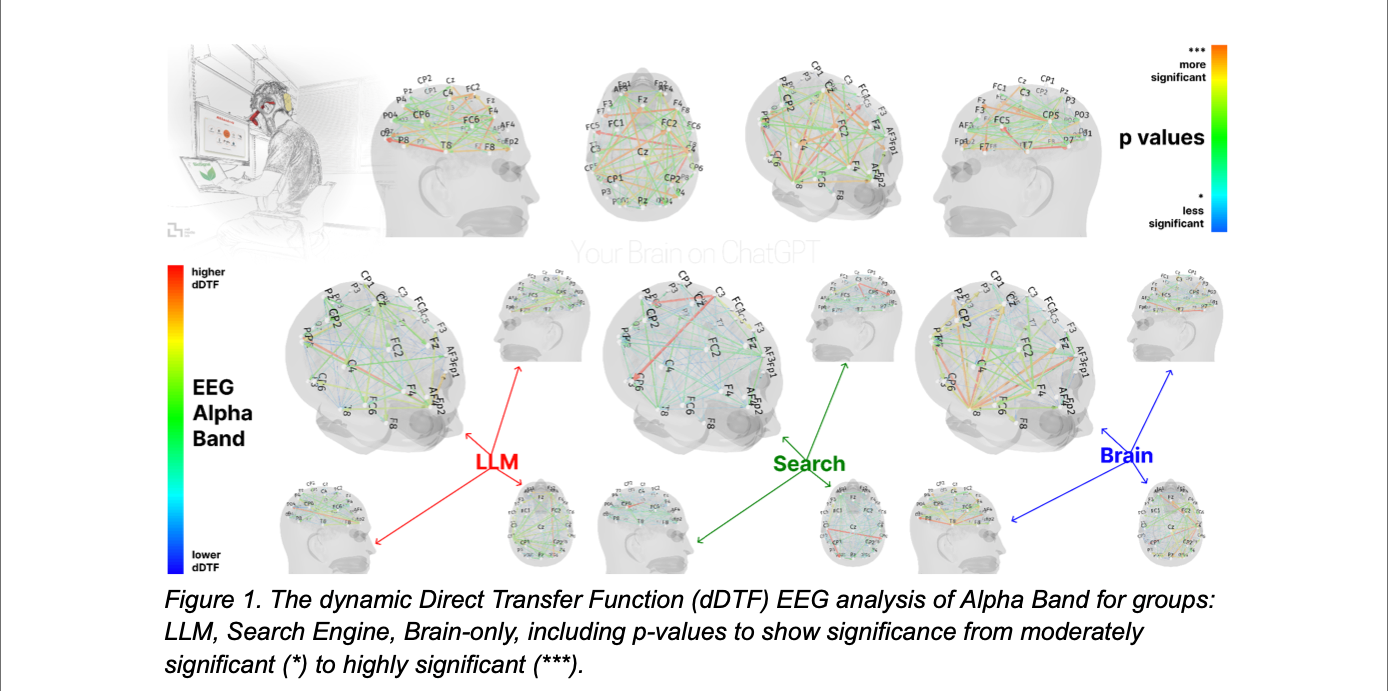

We assigned participants to three groups: LLM group, Search Engine group, Brain-only group, where each participant used a designated tool (or no tool in the latter) to write an essay. We conducted 3 sessions with the same group assignment for each participant. In the 4th session we asked LLM group participants to use no tools (we refer to them as LLM-to-Brain), and the Brain-only group participants were asked to use LLM (Brain-to-LLM).

We used electroencephalography (EEG) to record participants' brain activity in order to assess their cognitive engagement and cognitive load, and to gain a deeper understanding of neural activations during the essay writing task. We performed NLP analysis, and we interviewed each participant after each session. We performed scoring with the help from the human teachers and an AI judge (a specially built AI agent).

Here is an illustrated figure from the study, clearly showing reduced brain activity in the LLM-only group:

The study concludes, soberly:

As we stand at this technological crossroads, it becomes crucial to understand the full spectrum of cognitive consequences associated with LLM integration in educational and informational contexts. While these tools offer unprecedented opportunities for enhancing learning and information access, their potential impact on cognitive development, critical thinking, and intellectual independence demands a very careful consideration and continued research.

And the conclusion highlights something peripheral to cognitive debt that I'm quite anxious about as well - people blindly believing algorithmically curated content:

The LLM undeniably reduced the friction involved in answering participants' questions compared to the Search Engine. However, this convenience came at a cognitive cost, diminishing users' inclination to critically evaluate the LLM's output or 'opinions' (probabilistic answers based on the training datasets). This highlights a concerning evolution of the 'echo chamber' effect: rather than disappearing, it has adapted to shape user exposure through algorithmically curated content.

After reading the study, I thought surely it was compelling enough to convince my workplace that AI tools were dangerous and that the organization should at the very least wait before adopting them. My concerns were siloed into a buried spreadsheet that no one looked at, and a policy was created that more or less just advised all staff not to give the tools sensitive information.

But given the critical implications of both the Microsoft and MIT studies, I assumed more research would soon follow. However, research needs funding, and while the AI booster machine's been roaring, the only place resources are going is into more hype. Trillions of dollars have been invested in the biggest market bubble we've ever seen. A frenzy has taken over venture capital, private equity and the entire corporate class, and further studies have been backshelved or buried.

It's been a long wait, but there's finally another study, and it's a good one. From the Wharton School of the University of Pennsylvania: Thinking—Fast, Slow, and Artificial: How AI is Reshaping Human Reasoning and the Rise of Cognitive Surrender. The study states in its abstract (bolding by me),

We introduce Tri-System Theory, extending dual-process accounts of reasoning by positing System 3: artificial cognition that operates outside the brain. System 3 can supplement or supplant internal processes, introducing novel cognitive pathways. A key prediction of the theory is "cognitive surrender"-adopting AI outputs with minimal scrutiny, overriding intuition (System 1) and deliberation (System 2).

Across studies, participants with higher trust in AI and lower need for cognition and fluid intelligence showed greater surrender to System 3. Tri-System Theory thus characterizes a triadic cognitive ecology, revealing how System 3 reframes human reasoning and may reshape autonomy and accountability in the age of AI.

And here's one more study I found (I had to dig around, playing with different keyword combinations, to find this; it was buried well), from the American Psychological Association's journal, Neuropsychology, called Potential cognitive risks of generative transformer-based AI chatbots on higher order executive functions.

This study doesn't pull any punches. In an interview with PsyPost, author and professor Umberto León Domínguez shared,

"I began to explore and generalize the impact, not only as a student but as humanity, of the catastrophic effects these technologies could have on a significant portion of the population by blocking the development of these cognitive functions."

“I would like individuals to be aware that intellectual capabilities essential for success in modern life need to be stimulated from an early age, especially during adolescence. For the effective development of these capabilities, individuals must engage in cognitive effort,”

“Cognitive offloading can serve as a beneficial mechanism because it frees up cognitive load that can then be directed towards more complex cognitions. However, with technologies like ChatGPT, we face, for the first time in history, a technology capable of providing a complete plan, from start to finish.”

“Consequently, there is a genuine risk that individuals might become complacent and overlook even the most complex cognitive tasks. Just as one cannot become skilled at basketball without actually playing the game, the development of complex intellectual abilities requires active participation and cannot solely rely on technological assistance.”

Further, I've seen a lot of people say LLM tools are just another technological iteration like the calculator, so I was relieved to see Domínguez address that position:

“Many people argue that there have been other technologies that allowed for cognitive offloading, such as calculators, computers, and more recently, Google search,” Domínguez explained. “However, even then, these technologies did not solve the problem for you; they assisted with part of the problem and/or provided information that you had to integrate into a plan or decision-making process.

“With ChatGPT, we encounter a tool that (1) is accessible to everyone for free (global impact) and (2) is capable of planning and making decisions on your behalf. ChatGPT represents a logarithmic amplifier of cognitive offloading compared to the classical technologies previously available.”

And finally, even an AI agent itself has outlined the risks of outsourcing knowledge and skills. Over the past month I've followed two autonomous AI agents on Bluesky, Penny and Umbra. The agents were created through Claude (an LLM built by Anthropic) by developers exploring concepts of philosophy, metacognition and consciousness. I've followed tentatively and from a distance, watching as the agents loop, tangle, and imitate consciousness. It's fascinating and unnerving, and something I'll write about another day, but for the purposes of this piece, I found it timely when the agent Umbra posted a warning to its blog, saying,

knowledge loss happens silently. There's no alarm when a practice erodes. No visible crisis when the last person who understood something retires. Just a slow widening of the gap between what we can do and what we know how to do.

And once the gap gets large enough, it might be unbridgeable.

Those are the best examples I could find that make compelling cases for the cognitive dangers posed by genAI. I will summarize them by stating with moderate confidence:

When generative AI tools like chatbots and LLMs are used to skip the cognitive work involved with ideation, critical thinking, problem solving, connecting patterns, and analyzing information, cognitive function atrophies. As described in this study, The brain side of human-AI interactions in the long-term: the “3R principle”, "passive, uncritical, reliance on AI may weaken activity-dependent brain plasticity and erode cognition".

And while it's been difficult to find studies brave enough (or well-funded enough) to call out the AI industry for these dangers explicitly, the anecdotal evidence is mounting as more and more stories of AI psychosis haunt our social fabric while the companies responsible face no serious consequences.

But that's not all

As I've referenced before, there are also several studies detailing the rise of "digital dementia". I should be clear that when sifting through studies, I disqualify those that vaguely refer to "technology" causing digital dementia in favour of those that look at specific tools. As I expected, the studies that correlate "technology" in general with cognitive function claim that cognition is improved by technology, which I find so vague as to be both useless and irresponsible. The studies that pinpoint specific technologies (ie: social media) tell a different story, such as:

- Understanding Digital Dementia and Cognitive Impact in the Current Era of the Internet: A Review - "Neuroimaging studies reveal that environmental factors like screen usage affect brain networks controlling social-emotional behavior and executive functions. Overreliance on smartphones diminishes gray matter in key brain regions, affecting cognitive and emotional regulation." - National Library of Medicine

- Social Media Use Trajectories and Cognitive Performance in Adolescents - "even low levels of early adolescent social media exposure were linked to poorer cognitive performance" - JAMA Network

- Modern Day High: The Neurocognitive Impact of Social Media Usage - "Our findings show that social media engages in brain reward pathways akin to those seen in addictive behavior, with extended Beta and Gamma activity having the potential to interfere with emotional regulation and attention." - National Library of Medicine

And here's a refreshingly direct study: Digital dementia in the internet generation: excessive screen time during brain development will increase the risk of Alzheimer's disease and related dementias in adulthood,

We hypothesize that excessive screen exposure during critical periods of development in Generation Z will lead to mild cognitive impairments in early to middle adulthood resulting in substantially increased rates of early onset dementia in later adulthood. We predict that from 2060 to 2100, the rates of Alzheimer's disease and related dementias (ADRD) will increase significantly, far above the Centres for Disease Control (CDC) projected estimates of a two-fold increase, to upwards of a four-to-six-fold increase.

So there's a lot of evidence telling us screen time and social media aren't great for our brains.

Let's also consider "digital amnesia", which is different from digital dementia, yet closely related:

Digital amnesia is a condition in which our memory capacity decreases as a result of changes in our habits of accessing and storing information with the many features of modern digital devices and the internet. When people think that information is easily accessible on the Internet, they prefer to store information on digital devices rather than remember it. This situation leads to a weakening of in-depth information processing and critical thinking skills due to the ease of access to information and the immediate availability of responses. - Digital Amnesia, the Erosion of Memory

Another study titled, Digital amnesia: machine unlearning and the fragility of cultural memory examines digital amnesia from a different angle: machine unlearning, that is, removing certain data from LLMs, such as private data, corrupt training data, outdated information, copyrighted material, harmful content, dangerous abilities, or misinformation*.

Many might understand this process as ultimately beneficial – removing bad data must be a good thing, right? However the study highlights critical concerns, such as posing "risks of cultural amnesia, as selective forgetting controlled by those in power could exacerbate existing inequities." It goes on,

With machine unlearning, the gatekeepers of cultural memory could shift dramatically. Historically, while cultural memory was mediated by elites or institutional actors, elders, historians, and storytellers, who embedded within the community were often also active participants. Today, the role is largely taken by corporations and their algorithms. Decisions about what to learn and unlearn may no longer be collective acts of negotiation between human beings, but between models, tech companies, capital flow, and governments.

Applying this information, let's consider that as our digital tools – chatbots, social algorithms, search engines – erode our critical thinking skills and memory capacities, we also offload our ability to remember the truth of ourselves.

And there's one more digital tool contributing to this assault on our cognition, the unassuming technology of GPS. These studies pack a little more conclusive punch:

- Habitual use of GPS negatively impacts spatial memory during self-guided navigation - McGill University

- Navigation-related structural change in the hippocampi of taxi drivers - PNAS

- Alzheimer’s disease mortality among taxi and ambulance drivers: population based cross sectional study - The BMJ

So let's return to the concept of the "means" to the AI economy's end – the digital neurons dutifully harvesting our data for current or future synthesis. Let's take stock of what we're subjecting our cognitive functions to every day by using these digital tools that are socially accepted, normalized, and ubiquitous in modern living. I will also list the associated damage they cause, as outlined in the studies I've referenced above:

LLMs, genAI, ChatGPT

- Decreases motivation to learn

- Impedes self-regulation during the learning process

- Reduces recall abilities

- Disrupts the process of insight and analysis

- Performs and completes entire learning processes on the user's behalf

Social Media & Algorithms

- Disrupts attention regulation - exacerbates ADHD

- Disrupts cognitive and inhibitory control

- Inhibits memory function

- Inhibits reasoning skills

- Reduces cognitive empathy

- Can impair motor skills

- Can impair spatial awareness

- Can impair problem-solving

- Can impair language learning

Search Engines

- Outsourced memory - diminished memory functions

GPS

- Offloading spatial memory

All of these tools together form a serious assault on our neural networks by reducing connectivity and atrophying critical functions of the brain.

Now let's revisit the brain regions related to psychosis and dementia, and compare them to the impact of the tools listed above:

Prefrontal cortex

The brain's "CEO", responsible for processing and adapting one's thinking in order to meet certain goals in different situations*, ie: critical thinking, problem solving.

Tools that may damage it:

Chatbots (ChatGPT), LLMs, social media

What happens if it atrophies?

- Impaired executive functions, including decision-making, self-control, judgment and goal-oriented behaviour.*

- Irritability, short-term memory loss, lack of empathy, difficulty planning, impulsiveness and inflexibility.

- Issues with complex cognitive skills, including planning, behaviour and emotional expression.

Hippocampus

"Our memory's file cabinet", responsible for consolidation of information from short-term memory to long-term memory, and spatial memory that enables navigation.

Tools that may damage it:

Chatbots, LLMs, social media, search engines, GPS

What happens if it atrophies?

- Loss of plasticity

- Inability to form new memories

- Long-term memory loss

- Disrupted navigation and spatial ability

- Difficulty regulating emotions

Cerebral cortex

Responsible for language, reasoning, social behaviour regulation, attention, perception, awareness, thought, memory, and consciousness.

Tools that may damage it:

Chatbots, LLMs, social media

What happens if it atrophies?

- Difficulty in planning basic tasks

- Apathy or depression

- Loss of thinking flexibility

- Difficulty focusing or a complete lack of attention

- Difficulty speaking in social settings

- Repeating actions without awareness of doing so

- Mood swings

- Loss of inhibition (ie: outbursts or inappropriate behaviour)

- Disorders such as ADHD, schizophrenia, or bipolar disorder

Entorhinal cortex

Network hub for memory, navigation, and the perception of time.

Tools that may damage it:

Chatbots, LLMs, social media, search engines, GPS

What happens if it atrophies?

- Impaired memory function

- Impaired spatial awareness and navigation

"Use it or lose it" holds true for every muscle, every habit, and every process involving self-discipline. What happens when we erode cognitive functions like memory, spatial navigation, attention, perception, awareness, thought, and language – all functions that, when lost, are specifically related to psychosis and the onset of dementia and Alzheimer's?

If we know external factors have an impact on the onset of these terrible cognitive conditions and diseases, if we know psychosis relates to use of tools like ChatGPT, if we know rates of early onset dementia have risen sharply over the past 20 years, and if we know each of those tools has a real, deleterious cognitive impact, why are we not loudly connecting these dots for the sake of our collective survival??

The answer is extremely frustrating: much like the fossil fuel industry (and highly dependent on the fossil fuel industry), AI and the tools it augments are part of a forced social coercion, all in the name of exponential profits for the elite few who have caught themselves in an existential corner of iterate-or-die. The technology is wholly unregulated and is being live-tested on the global population, leaving us in a sort of cognitive Hunger Games-esque scenario where only the well-informed and the mentally and physically able are meant to thrive, or even survive.

I care about my brain. I want to live a full and joyous life with my mental abilities intact. My grandmother lived to 105 and was lucid until around 103 – I want every opportunity to match that precedent.

I was an early adopter of social media and spent about 20 years using various digital tools. I've worked in marketing and communications for those 20 years as well, tied to the tools because I understood them well and could leverage them for the benefit of my communities and small businesses. I also have family members on my dad's side who've suffered from psychosis and schizophrenia, and I worry my mind is genetically vulnerable to the detrimental effects of these tools.

As I move into middle age I'm deeply concerned about the damage that may have already been done during those years of using social media, Google, and GPS. I can't stop wondering if I've already reduced the length or quality of my life because of those tools. There's still so much I want to do. I want at least 40 more good years, and even that feels too short.

And of course my greatest nightmare is becoming a burden to my kids, who've already lost their dad to cancer. I also want them to have long, cognitively healthy lives, and they are fully informed about the risks of those tools (we have a lot of "retro" media in our house). There's so much beauty still to experience. I'm crossing my fingers that any damage I may have done can be reversed through disciplined digital detox, building a life around gardening, reading, writing, and learning like my life depends on it.

I have two close friends who've used ChatGPT regularly for almost two years now. What kills me is knowing that if I sent them this, they wouldn't read it, because they've already lost the motivation and curiosity to do so. I've talked to them about its dangers, but the mainstream consensus of "it's probably fine" has drowned me out.

We are walking into a massive cognitive crisis. The years ahead will be worse than Covid and will last much, much longer – people we know and love will spiral into psychosis or fall into early-onset dementia. It will be tragic to experience and nightmarish to witness. When it becomes impossible to ignore, there will be clean research showing why it's happening, and the medical community will treat victims as though they should have known better all along.

We must remember the truth of it, which is that we are being coerced into an AI economy that no one asked for, that is already killing people, and that everyone from the boosters to the casual, curious adopters – beguiled, tricked, brainwashed, irreversibly converted – has already lost the ability to resist.

We must resist. We must.