The ethos of grift has taken the nonprofit sector, and AI is its accelerator

Yesterday while watching a Stanford-sponsored, nonprofit-targeted presentation recommended by an executive-level director on "healthy cognitive offloading with AI", I had an anxiety attack. The presentation was AI-generated, full of errors, logical inconsistencies and blatant manipulation of reason. The presenter was taken at her word throughout the presentation, because she had written two whole books. Were the books about AI? No. Were they about cognitive science? Also no. They were about "happy healthy nonprofits", which clearly verified her as an authority on one of the most existentially sensitive technologies of our time.

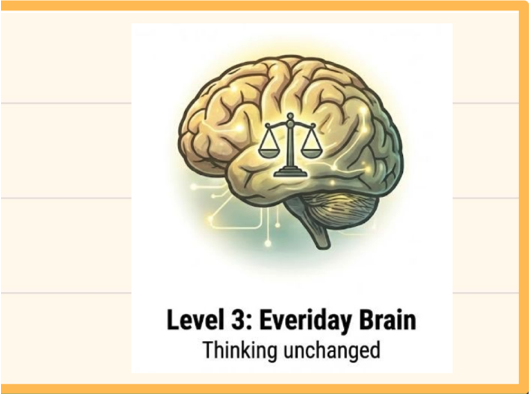

The presentation literally included a slide that read, "AI is the most powerful cognitive offloading tool we’ve ever had – and we love it!" along with a "prototype" (her words) graphic that ranked cognitive offloading in levels of one to five, advising that the most "normal" place for a person to be was "level 3", which included using generative AI for prompts and first drafts, framed as "augmentation" rather than offloading.

This is a graphic from the presentation. I wish I was kidding:

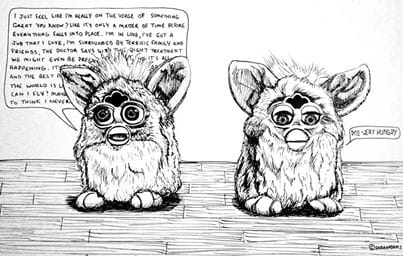

Friends, the whole presentation, from start to finish – the nonsensical concepts, the disjointed reasoning, the assertion of authority where none existed – was so overwhelmingly AI-generated the woman may as well have been a muppet. The experience of watching the presentation, which was, again, recommended by an executive-level director to an ENTIRE STAFF BODY, was surreal and disturbing on a level I have yet to articulate.

This could easily be a Part II to my post "AI's cognitive crisis is being willfully ignored", because my god, the realization of how cooked we truly were washed over me like a tide I knew was coming but just hadn't quite accepted. People are getting dumber. It's happening faster than I thought it would.

But as I started writing I realized how effortlessly the "nonprofit + grifter ethos" pieces actually clicked together, and I think it merits exploration.

But before I dig in, let's just bookmark the following, because AI's cognitive crisis IS being very willfully ignored, and it's rather horrifying:

- If "AI" (whatever computer system that alludes to) cannot be conscious – fine. Let's agree it can't be. "AI", despite the hype, is simply a stochastic parrot. Got it. From there, it follows:

- A user who offloads cognitive capacity to such an extent that they become dependent on the AI system effectively becomes a stochastic parrot too, no? Surrendering organic cognition to artificial cognition includes surrendering the organic processes involved, replacing them with the artificial, "random", stochastic ones. Such systems "store" and "host"; we're told they keep our cognitive processes safe and secure, are we not? (i.e., the "healthy cognitive offloading" referenced in the presentation recommended to me and an entire staff body)

So it follows...

- The user effectively becomes an extension of the host intelligence. And whether or not you think AI could be "conscious", the user is still essentially surrendering their own consciousness, gradually at first, but inevitably following the addiction trajectory towards overwhelm, because AI is the most epistemic addiction that currently exists ("and we love it!" Like OH MY GOD).

Nonprofit is the next fertile frontier of Grift

Deep breaths. Jesus Christ, spelling this out is irritating.

Let's start with "Thought Leaders".

We're seeing "thought leadership" crop up all over LinkedIn, and subsequently nonprofit leaders are flocking to it like moths to light. Well-managed by the platform's algorithms, anyone in the white collar class who sees themselves as, or aspires to be, a "leader" is now hungry for the title of "Thought Leader". The validation and peer recognition are just too delicious.

And LinkedIn has iterated beyond simple "likes" – ho ho ho, go ahead and "like" a post, endearing gesture, adored by the staff classes, a simpler react evoking simpler times. But we're doing thoughts now, which anyone using the lightbulb react would know.

More lightbulbs = more valuable thoughts, and while the system is informal, it's very real. Eventually a user's thoughts are deemed valuable enough they become a Thought Leader, a title democratically bestowed and rightfully earned after months of dutifully crafted, "thoughtful" posts, often augmented by generated Substack articles and one or two self-published books (also generated). Entire webs of Professional Validation can now be effortlessly spun.

And because nonprofit frames every challenge as "opportunity", an entire class of brave AI adopters are racking up credentials and stepping forward as consultants, then sitting back and letting that sweet nonprofit dick roll in.

But the LinkedIn light of "thought leadership" is only the effervescent field that surrounds the burning core of "AI adoption". Leaders NEED TO KNOW how to adopt. EFFICIENCY. DELIVERABLES. DISRUPTION. GAME CHANGERS. SCALABILITY – these concepts thrum steadily in the backgrounds of their day-to-day. WHAT MAKES A GOOD LEADER? YOU DO. YOU MAKE A GOOD LEADER. AND THIS IS THE WAY.

And I'll be real, I am fully aware of the value of novel thought. I can count several occasions over the past decade where my published thoughts have been poached by larger accounts and "influencers", notable example here – eventually it killed the fun of sharing them, and it's a big reason why I prefer laying low (while knowing everything I publish here is LLM content). Having my ideas co-opted "for the good of the whole" also happens to me regularly in workplace environments. Does it kill my soul? Yes. Which is why I'm exiting the whole mess as soon as I can. If the inevitable path for my thoughts is capitalization, it will be me who controls how they're capitalized.

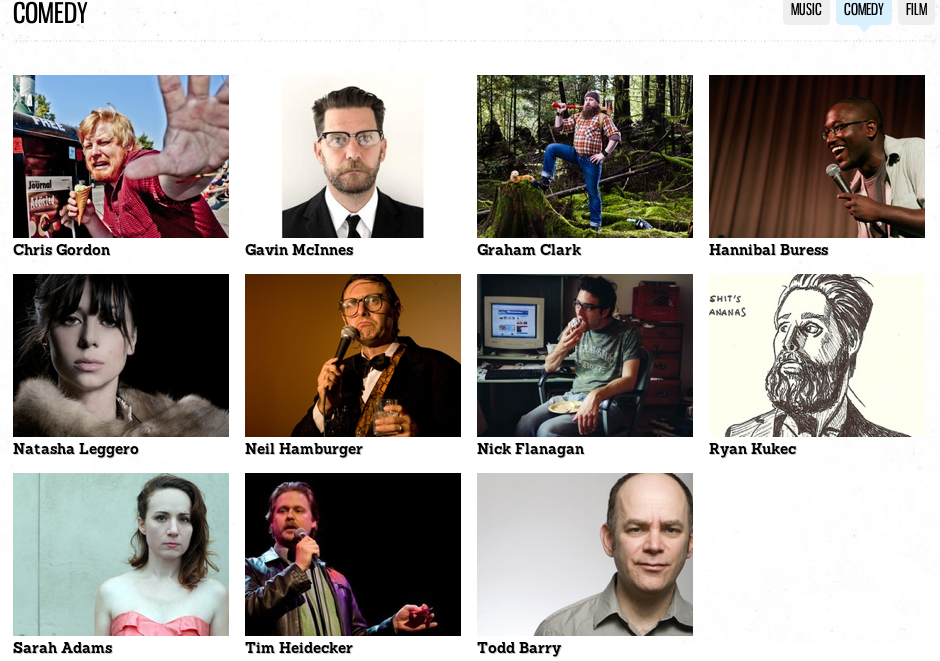

I was an early "content creator", before the term became a thing. I graduated art school in 2005 and got into comedy. I held influence and enjoyed cool opportunities. And when capital hijacked everything, when the markets inserted "influencers" and nepotism dominated culture, I retreated. I had kids to raise, and the trajectory of the content machine seemed clear enough to me. I knew how exploitable I was, and the ROI just wasn't worth it. I had a small chunk of debt-free capital after a retroactive lump payment of government benefits totalling $10k, the most money I'd ever had to my name at that point – which I used to buy an abandoned 5-acre homestead in the Southern Alberta prairies. I tried to fuck off but still had to make a living, which I achieved by selling flowers. Then my coparent died and I had to return to a "stable" career with benefits, which was communications for nonprofit.

I can recognize the choices of my past as extraordinary. I know I stand out. But I'm glad I retreated when I did, as we can now clearly see where those "influencer" paths led most people: burnout, cancelled, stalked, doxxed, digital brainrot, rampant dysmorphia, prisoners to their brands. As CONTENT became the most valuable commodity of the 2010s and 2020s, a deep sense of exploitation settled over the arts. The marketization of Self encompassed everything.

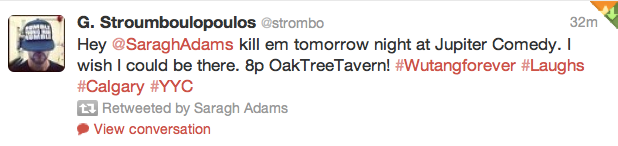

And here's proof I counted as a "somebody" for a little while:

Coming from that world, I feel like I got an advanced screening of what has now taken over the "normie" white collar class. AI is a siren song to noncreatives who couldn't participate in the past decades of monetized talent, but because genAI has already been hotly rejected by professional creatives, all that's left is the market of thought.

What is the "ethos of grift"?

It's a term I just made up – look at me go having novel thoughts! Too bad it's probably one of the least monetizable ones!

So what are the main qualities of a good grifter? I'm going to combine a few sources (using my own brain) for this list, but most of us can inherently define what a grifter is:

According to Finance Dictionary Pro, a grifter brings:

- Sudden Friendliness: Sudden and excessive friendliness without apparent reason.

- Too Good to Be True Offers: Deals or opportunities that seem too advantageous to be legitimate.

- Urgency: Creating a false sense of urgency to pressure the victim into making hasty decisions.

Every AI sales tactic embodies those qualities.

And according to Words Defined, a grifter carries the following qualities:

- Charm and Charisma: Many successful grifters possess a compelling presence that draws people in. Their ability to establish rapport can make it easier to manipulate individuals.

- Cunning and Resourcefulness: Grifters are skilled at devising elaborate schemes and tactics for deception. They often adapt their methods to exploit specific vulnerabilities in their targets.

- Deceptive Communication: Effective grifters are adept at communication, often using persuasive language, half-truths, and confidence to gain trust and elicit compliance.

- Lack of Empathy: Many grifters show little regard for the harm they cause others. This lack of emotional connection allows them to exploit their victims without remorse.

Compare that with the characteristics of a thought leader (below) and you might quickly see how easily the two might be confused. We can't know whether someone is empathetic or not, but I can tell you with certainty that genAI is not.

The Market of Thought is the nonprofit sector's siren song

Let's return to the concept of "Thought Leaders".

According to Addicted 2 Success (sigh), these are the "10 Powerful Traits Every True Thought Leader Possesses", notes in parentheses my own:

- Credibility and Trustworthiness (easy to conjure online)

- Vision and Innovation (easy to fake with AI)

- Strong Communication Skills (easy to offload with AI)

- Selflessness and Service (easy to signal online)

- Intellectual Curiosity (easy to fake with AI)

- Emotional Intelligence (easy to fake with wellness jargon)

- Resilience and Conviction (easy to fake overall)

- Creativity and Critical Thinking (easy to offload to AI)

- Community-Oriented (easy to signal online)

- Big Picture Thinkers (easy to fake with AI)

And you might be asking, "but what are the four A's of thought leadership????" Don't worry, Addicted 2 Success has you covered:

- Attitude: A positive, growth-oriented mindset and the courage to speak up.

- Aptitude: Deep expertise in a niche area that others recognize and respect.

- Abilities: Communication, problem-solving, and the ability to translate complex ideas simply.

- Awareness: A strong understanding of your industry, your audience, and the current landscape of ideas.

Again, AI easily mimics all of the above.

Yet the qualities above are essentially the Ten Commandments of nonprofit leadership. Every characteristic has been validated by the markets through monetized wellness pop psych and thoroughly integrated into nonprofit culture. Compare them to any organization's stated values, then cross-reference with every virtue-signalling post celebrated dutifully by economically vulnerable audiences, and it all points back to the qualities listed above.

And every market demands competition. LinkedIn has marketized thought leadership, demanding efficiency and quantity above all else. Once a potential "leader" has tasted validation, the addiction takes hold. Engagement addiction is real enough that lawsuits positioning it as destructive are being won in landmark cases, but you won't see the algorithms boosting such news. Gee, wonder why.

Generative AI is the answer to every nonprofit leader's woes. The promise of productivity and efficiency at bargain prices is so alluring they legitimately see the risk of waiting and potentially missing the boat as more costly than hastily taking an external consultant's advice and spending tens of thousands of dollars per month on agentic services. But beyond that siren call, AI is the supervitamin to every nonprofit grifter who yearns for recognition and validation.

So now we have "thought leaders" like the person who made the presentation I saw, who was somehow hired by a reputable academic institution ("credibility and trustworthiness", remember) selling 90-minute slop presentations with such bold thoughts as, "AI is the most powerful cognitive offloading tool we’ve ever had – and we love it!", including clips of Sam Altman – a person loudly called out by the business class for compulsively lying and known among many as a con man – talking about how he preserves his cognition by such revolutionary practices as writing down notes, followed by a slide encouraging employees to go for walks when they want to boost their cognition. When the topic of cognitive atrophy surfaced, she glazed past it with, "Can we say for sure yet? Some of these are signals *shrug*, I have an existential crisis every day, but I still work with it". She also noted some effects of AI could be "problematical", so, clearly she took its risks into heavy consideration.

The presentation tells us things like "discernment" can be offloaded to AI, however "judgement" must be handled by the human mind only. I'm sorry but, what the fuck is the difference between discernment and judgement? Jesus just thinking about that goddamn presentation is getting my heart rate up again. It was so. stupid.

Nonprofit is a sector defined by vulnerability

Every person who works in nonprofit has confronted narcissism among their leaders and coworkers. We've all known those people and many of us have been gaslit into enduring their abuse through doublespeak wellness jargon. Many such people, especially those who may have previously been restrained through hierarchal models, are now advancing their careers into Thought Leadership, largely thanks to AI.

The entire purpose of the sector is to fill the gaps left by governments allying with capital, and its workforce is populated by sensitive and wounded souls who just want to make a difference. Chaotic, overburdened workflows and low salaries are the costs employees bear for the greater good, and this raw hamster-wheel mode turns the entire sector into a mark for bad actors, who, thanks to AI, are almost impossible to tell from the good actors. This is a discernment call nonprofit is fundamentally incapable of making. But according to the presentation I saw, AI can handle such discernment for them now, and the answer will always be, "we love AI", so no worries there I guess!

Platforms like LinkedIn have "disrupted" the old hierarchal models and told everyone they can be a leader, all they need are Good Thoughts™. And because the frazzled sector is configured to defer credibility to "authority" and community clout – two characteristics easily imitated online – organizations are finding themselves in situations where 50-100 people at a time are being subjected to tactical AI sales pitches sanctified by "reputable" sources who are themselves funded by and at the mercy of venture capital (which the entire global economy currently hinges on).

Nonprofit organizations are completely exposed – not just through their systems (many have already granted full root permission access to agentic "IT security" firms), but to every AI-supercharged grifter. And even verified consultants with solid community track records are being lulled and homogenized by genAI, now advising nonprofit leaders to adapt, and how best to adapt, because it's very profitable for them (the consultants) to do so.

And once you see it happening, it can't be unseen. The machine of capital is not only consuming the nonprofit sector, but is in the early stages of consuming the cognitive capacity of every person working within the sector. AI is the most powerful cognitive offloading tool of our time, "and we love it!" Ugh.

But at least art has been freed, so I'll see you there soon

When I see a tsunami approaching I get off the beach. I love my brain. My brain is me. I love my life. My life is me. I think, therefore I am. And I am good! I want to preserve my life, my mind, my self!

I wonder how Descartes would react to a technology that literally deprives humans of their capacity to think. "I don't think, therefore I am... not" seems like the obvious take. We are our minds. That so many are so blasé about surrendering their ability to think to artificial intelligence – only the most rotten iteration of capitalism could convince anyone to surrender such a thing.

This adjusts the "consciousness" debate too (which exists whether we like it or not) – if the assertion is that AI cannot be conscious, does that not also imply a user would become LESS conscious in offloaded states?

If a user's ability to think has literally been offloaded to AI (or whatever computer system we're calling "AI"), which again, working in the nonprofit sector I can say, is happening, does that user not meet the criteria of a stochastic parrot as framed by Emily M. Bender, Timnit Gebru, Angelina McMillan-Major, and Margaret Mitchell? The criteria include (as per Wikipedia):

- LLMs are limited by the data they are trained by and are simply stochastically repeating contents of datasets.

- Because they are just making up outputs based on training data, LLMs do not understand if they are saying something incorrect or inappropriate.

What happens when humans become the AI-supercharged vehicles for "dangers such as environmental and financial costs, inscrutability leading to unknown dangerous biases, and potential for deception", unable to "understand the concepts underlying what they learn"?

A user who's offloaded their functional cognition to AI essentially becomes a vessel for that intelligence, which, conscious or not, can be, and obviously is, driven by corporate interests. How are people just trusting these anti-human CEOs with their minds?? How long until people start involuntarily reciting ad scripts or dreaming of product placements? How long until a chatbot tells entire segments of users to enact unspeakable harms on others – framed as accidental, of course, just as many cases of AI psychosis already have been?

Why are we just accepting that a technology able to influence herd mentality, and that has already shown no care for human life, is a good and safe thing to give our minds to?

You guys this is insane. We are living in a cuckoo bananas timeline that is rapidly getting more cuckoo bananas.

Preserve your minds, please. There is no good outcome, in any timeline, for surrendering your ability to think. Regaining those abilities requires legitimate rehabilitation that can only be prompted externally, because you'll have lost the ability to recognize the loss. That's what Alzheimer's is, for fuck's sake. It's a nightmare – why are we gambling with such an outcome??

And if you work in nonprofit, either loudly resist AI (which I do) or leave (which I am). You have the right to preserve your own mind, and imo, the normalized lull of nonprofit AI adoption is a very dangerous place to be right now.

Update: After publishing this, it occurred to me that, down the line, when early-onset dementia can be directly correlated with LLM offloading (which it will be, since social media already has been and AI brings those effects on steroids), it's likely there will be a ton of lawsuits against workplaces, backed by records clearly showing the workplace mandated or encouraged LLM adoption.

Employees should probably be documenting such adoption policies just in case. Most workplaces haven't even conceived of such an outcome, but the probability is extremely high.

If a person develops early-onset dementia in the next couple decades, and it can be directly linked to LLM dependence, and if they have proof their workplace mandated or strongly encouraged adoption which led to their dependence, that workplace would be liable. And I guarantee no workplace currently has liability measures in place for such situations.

Like, right now it's honestly like a workplace saying, "hey everyone, we're going to start using heroin now, it's highly addictive and has ruined many lives, but apparently it feels great," and then hosting all-staff lunch 'n' learns on the different ways staff can integrate heroin into their workflows. Ten years later the substance is proven to be wildly destructive, plus this version of heroin also causes Alzheimer's, so it isn't just addiction they're liable for, it's a human being's loss of function.

So yeah, if you've been mandated or encouraged to adopt AI in your workplace, keep tight records on all those instances and adoption/integration policies. Hell, do it even if you never use LLMs, just to help your coworkers get justice if the time comes. Their kids might need that payout.

Update 2 (in the same night!): Hey LinkedIn die hards, check this out:

"Because LinkedIn knows each visitor’s name, employer, and job title, every detected extension is matched to an identified individual. And because LinkedIn knows where each user works, these individual scans aggregate into detailed profiles of companies, institutions, and government agencies, revealing which software tools their employees use without the organization’s knowledge or consent."

"The malicious JavaScript that Microsoft secretly injects into the LinkedIn website searches each user’s browser for installed software applications.

The search reveals:

- Political opinions of users, through extensions like “Anti-woke,” “Anti-Zionist Tag,” and “No more Musk”

- Religious beliefs, through extensions like “PordaAI” (blur haram content) and “Deen Shield” (blocks haram sites)

- Disability and neurodivergence, through extensions like “simplify” (for neurodivergent users)

- Employment status, through 509 job search extensions that reveal who is looking for work on the very platform where their current employer can see their profile

- Trade secrets of millions of companies, by mapping which organizations use which competitor products, from Apollo to ZoomInfo

LinkedIn has not disclosed this practice in its privacy policy. There is no mention of extension scanning in any public-facing document."